AI Music is Fast Food

It learns from the greatest. It produces the average. And it has no culture.

In 2004 I gave a talk to colleagues in Melbourne arguing that every new technology that disrupts copyright is good for art.

I had the receipts. The piano roll did not destroy music, it created the modern music publishing industry. Radio did not destroy live performance, it built the first national audience for popular music. The VCR did not destroy Hollywood, it doubled its revenue. Napster did not destroy the record business. Streaming did, and the industry streaming built is now generating more revenue than the CD ever did, even if the way that revenue reaches artists is a fight that is not yet over.

Every time a new machine threatened to swallow music, the panic was the same. In 1906 the composer John Philip Sousa told Congress that talking machines were going to ruin the artistic development of music in America, that the human vocal cord would be eliminated by evolution as the tail had been when man came down from the ape. Congress did not ban the talking machine. They created compulsory licensing instead. A thousand times more music got made, by a thousand times more artists, reaching a thousand times more people.

I should say at this point that I lead a double life. I am also a musician and studio engineer. That means I have a dog in this fight. I have had one for as long as I can remember.

I was right in 2004. I am going to tell you why AI-generated music is different.

In 1995 Steve Jobs gave the interview that became known as The Lost Interview. He was talking about Microsoft. He said the problem was not their success, they had earned their success. The problem was that they made third-rate products. The products had no spirit. They had no taste. They did not bring much culture into their products. They were pedestrian. And the saddest part, Jobs said, was that a lot of customers did not have a lot of that spirit either.

Then he landed it. “Microsoft’s just McDonald’s.”

The line about taste is the one people quote. The line that matters is the one about culture. Microsoft’s products did not bring culture into them, because Microsoft did not have culture to bring. They had market share, and a sales engine, and a willingness to ship the thing that was good enough.

AI-generated music is the same trade. It is fast food.

The previous waves were distribution technologies. The piano roll reproduced a human composition. The radio broadcast a human performance. The VCR recorded a human program. Napster moved a human recording from one hard drive to another. In every case the human was upstream of the machine. The technology made the human’s work cheaper, faster, more available. It did not replace the human. It carried the human further.

AI-generated music is not a distribution technology. It is a replacement technology. It does not carry the artist further. It removes the artist from the equation entirely.

It learns from the greatest. Then it produces the average.

This is not a slur. It is the literal mechanism. A generative model is trained on the best music ever made, every Beatles record, every Bowie record, every record that mattered enough to end up in a training set. It then produces the statistical centre of that distribution. Not the peaks. The mean. The thing that is most like everything else it has heard.

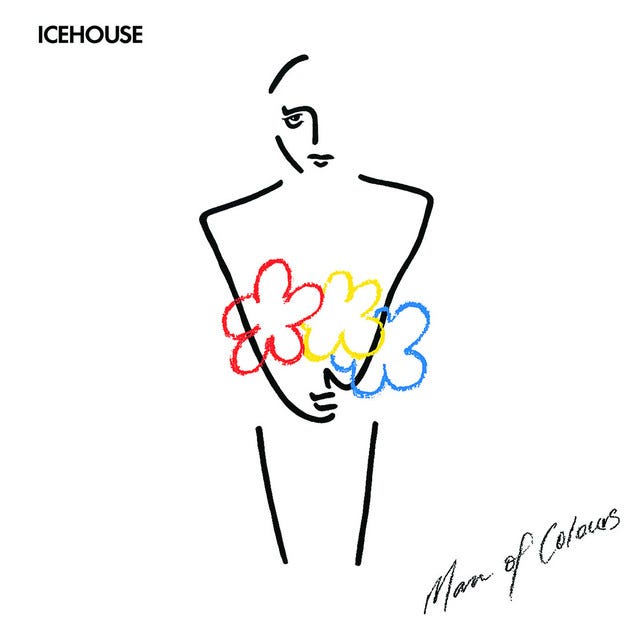

A model will never make ICEHOUSE’s Man of Colours. A model will never make Michael Jackson’s Thriller. Iva Davies wrote Man of Colours thinking about a painter and what it cost to live a creative life, and the whole record carries the weight of that. Thriller happened because Michael Jackson and Quincy Jones decided to make the biggest record ever made, and then did, with Eddie Van Halen walking in to play a solo on a pop song nobody else would have asked for. Those records are not points on a distribution. They are decisions. Specific people, specific rooms, specific arguments, specific risks that almost did not pay off. A model has no decisions. It has weights. It can only ever produce what is most likely given everything it has already heard, which is by definition the thing those records were trying to escape.

That is mathematically what these systems do. They cannot do anything else. A model cannot generate Heroes because Heroes is an outlier, and outliers are exactly what these systems are designed to smooth away. AI is Machine Learning. Machine Learning is Statistics.

Sure, what you get back is competent. It is in tune. It is in time. It will not embarrass you. It is also completely interchangeable with everything else it produces, and with everything else everyone else’s model produces, because it is all drawn from the same centre. Statistical frequency.

Jobs said it was about culture, and that is the test AI music fails cleanly. AI music has no culture because there is no one inside it to have a culture. There is no scene it came from, no city it grew up in, no record collection it stole from (in the way one musician takes inspiration from another), no band it almost joined, no producer it argued with at four in the morning, no girlfriend it lost, no funeral it played, no rent it could not make or guitar it stole. The music has no biography because the thing that made it has no biography.

Music is not just sound. Music is the residue of a life.

In 1976 David Bowie and Iggy Pop moved to Berlin. They were both fleeing something, drugs, fame, themselves. They lived in a flat in Schöneberg, walked to the Hansa studio every day, and made two records each across the next two years. The Idiot and Lust for Life for Iggy. Low and Heroes for Bowie. The Hansa studio was about five hundred metres from the Wall. The control room window looked out at the guard towers. You can hear the city in those records. You can hear the year. You can hear two men trying to put themselves back together in a divided town.

A model trained on every record ever made cannot make Heroes. It can make something that sounds like Heroes from far enough away. But it cannot make Heroes because Heroes required Berlin in 1977, and Bowie, and Iggy, and Eno, and Visconti, and the Wall, and the heroin, and the recovery, and the specific way Robert Fripp played guitar that afternoon. None of those things can be averaged into existence. They had to happen.

Eno had a card in his Oblique Strategies deck that read, “Honour thy error as a hidden intention.” That is what great records are built from. Mistakes someone chose to keep. A model cannot honour an error because a model cannot make one. It can only ever produce what was most likely. The thing that was almost not played, the thing that should not have worked, the thing nobody planned, that is the substance of every record that ever mattered. And it is exactly what these systems cannot reach.

This is not just an old idea. Look at Angine de Poitrine. A masked Quebec duo who went viral earlier this year off a KEXP session, two anonymous musicians in oversized papier-mâché alien heads playing microtonal math rock on a custom double-neck guitar, named after the medical French for the chest pain of a heart attack. Nine million views in two months. Two and a third million monthly listeners on Spotify. Dave Grohl said they blew his mind. Sean Lennon said he had never seen anything like it.

A journalist at Northeastern tried to prompt Suno to make a song in the style of Angine de Poitrine. What came back was, in his words, a far less adventurous kind of progressive rock. The model could not get there and it will never get there. Because Angine de Poitrine is, in the words of one music academic interviewed about them, at least three standard deviations from the norm, and the norm is exactly what these systems were built to return.

That is the whole argument in one band. Two people in alien heads, naming themselves after a heart attack, playing music in tunings the West does not use, and the world cannot stop watching. A statistical engine cannot produce them. It can only flatten them.

That is what culture means. It is not a feature you can add to the model. It is the thing the model was scraping past on its way to the average.

Fast food is fast, cheap, consistent, and available everywhere. It is also nobody’s grandmother’s cooking, and it never will be, and the entire economic logic of the category depends on it never being. A version of fast food that came from somewhere, that was made by someone, that tasted of a person and a place, would not be fast food anymore. It would be a restaurant. AI-generated music is built on the same trade. The whole point is that it does not come from anywhere.

The 2004 argument still holds for everything it covered. Distribution technology gives more art to more people. I would make the same case today about streaming, about the open web, about the collapse of gatekeeping in publishing, about every tool that puts a human creator’s work in front of a wider audience.

But the argument was always about technology that carries art. AI-generated music is technology that substitutes for art. It is not the next piano roll. It is the first time the machine has tried to be the composer instead of the press.

The piano roll defenestrated the sheet music publisher’s monopoly on distribution. AI music is being pitched as a tool to defenestrate the artist. That is a different building, and a much longer fall.

If we let it land, we will not get a thousand times more music made by a thousand times more artists. We will get a million times more music made by nobody, sounding like everybody, signifying nothing. The catalogue will double every six months and contain less and less of anything worth hearing. A noise so deafening that the humans actually making music will be drowned out.

You cannot get culture from a model. You can only get the average of the cultures it ate.

That is what is on the menu. It is fast. It is cheap. It is everywhere.

It is third-rate food.

Ross Woodhams has spent more than twenty-five years in enterprise infrastructure and solution architecture, working on self-healing datacentres, large-scale systems and the global enterprise stack for companies such as Avanade, Getronics and HP. In 2018 he founded UpscalarAI, a generative AI video restoration venture built on a strict principle of training only on ethically sourced material. He is the founder and CEO of Audalize, a managed background music services company operating across Australia, the United Kingdom, and New Zealand, building physical environment intelligence for the hospitality industry.